The Hubble Space Telescope was launched in 1990, and 35 years later scientists are still working to keep it operating. Image: NASA.

By Mariana Meneses

Modern observatories now collect far more data than any team of scientists can fully examine, shifting the central challenge of astronomy away from observation and toward interpretation. The key question is no longer what we can see, but how we decide which signals matter, how we extract meaning from vast datasets, and how we connect those signals to physical theories without being misled by noise, bias, or methodological choices.

Artificial intelligence has become central to this shift, for instance by allowing researchers to scan archival telescope images and reveal new objects and patterns that human observers never had the time, or the tools, to notice. At the same time, increasingly precise instruments, large-scale simulations, and flexible statistical methods are expanding what can be detected, while also making clear how sensitive scientific conclusions can be to the tools and assumptions used to interpret data.

A recent study illustrates this shift clearly: “Identifying astrophysical anomalies in 99.6 million source cutouts from the Hubble legacy archive using AnomalyMatch” (open access), published in December 2025 in the journal Astronomy & Astrophysics by David O’Ryan and Pablo Gómez of the European Space Agency (ESA).

Reporting on the study, Space.com explains that the research was motivated by a practical problem in astronomy: the sheer volume of data collected by the Hubble Space Telescope. With millions of images accumulated over decades, it has become impossible for scientists to visually inspect all observations in search of unusual or unexpected objects.

The researchers developed an artificial intelligence model called AnomalyMatch, designed to scan archival telescope images and flag objects that look visually unusual. Rather than searching for known types of galaxies or stars, the system was trained to recognize patterns and identify cases that stand out from the norm, similar, the authors note, to how the human brain processes visual information. Using this approach, the model analyzed nearly 100 million small image sections from the Hubble Legacy Archive in under three days.
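As a rough illustration of what it means to flag objects that stand out from the norm, the sketch below ranks a set of feature vectors by how far each sits from the bulk of the sample. It is not the AnomalyMatch method itself: the helper name `rank_anomalies`, the synthetic features, and the robust z-score used for scoring are all assumptions made for illustration.

```python
import numpy as np

def rank_anomalies(features, top_k=5):
    """Return the indices of the top_k rows farthest from the bulk, plus all scores.

    Hypothetical helper for illustration only; not the AnomalyMatch algorithm.
    """
    features = np.asarray(features, dtype=float)
    median = np.median(features, axis=0)
    # Median absolute deviation: a spread estimate that outliers barely affect.
    mad = np.median(np.abs(features - median), axis=0)
    mad = np.where(mad == 0, 1.0, mad)  # guard against division by zero
    robust_z = np.abs(features - median) / mad
    scores = robust_z.max(axis=1)  # most extreme feature for each object
    order = np.argsort(scores)[::-1]  # highest score first
    return order[:top_k], scores

rng = np.random.default_rng(0)
# Synthetic stand-in for per-cutout image features (assumed, not real Hubble data).
sample = rng.normal(0.0, 1.0, size=(1000, 4))
sample[42] = [12.0, -9.0, 15.0, 10.0]  # one deliberately odd object
top, scores = rank_anomalies(sample, top_k=3)
print(top)
```

In this toy setup the planted object at index 42 receives the highest score. A real pipeline would work on learned image features and would still need human review of whatever gets flagged.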

The AI identified around 1,300 anomalous objects, hundreds of which had never been documented before. Many of these objects do not fit neatly into existing categories and include galaxies caught in the process of merging, galaxies with large clumps of star formation, jellyfish-like galaxies with trailing streams of gas, and edge-on disks of material where planets may be forming. According to NASA and the researchers, this marks the first time a systematic, archive-wide search for astrophysical anomalies has been carried out using Hubble data.

By revealing hundreds of previously unknown and hard-to-classify objects, AnomalyMatch highlights the potential of AI-driven tools to guide future discoveries, especially as space telescopes continue to generate data at scales far beyond what humans alone can fully explore.

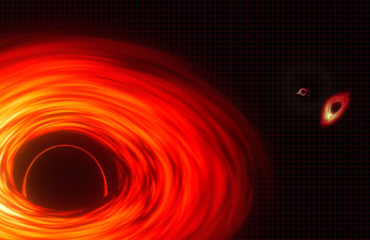

“Quasars’ Multiple Images Shed Light on Tiny Dark Matter Clumps”. Credit: NASA.

Other recent studies show that new technologies are also changing how astronomers measure the universe itself. In cosmology, many important quantities cannot be observed directly and must be inferred from complex signals. As telescopes and data-analysis tools improve, researchers are now able to revisit long-standing results using clearer observations and alternative approaches that rely less on earlier assumptions.

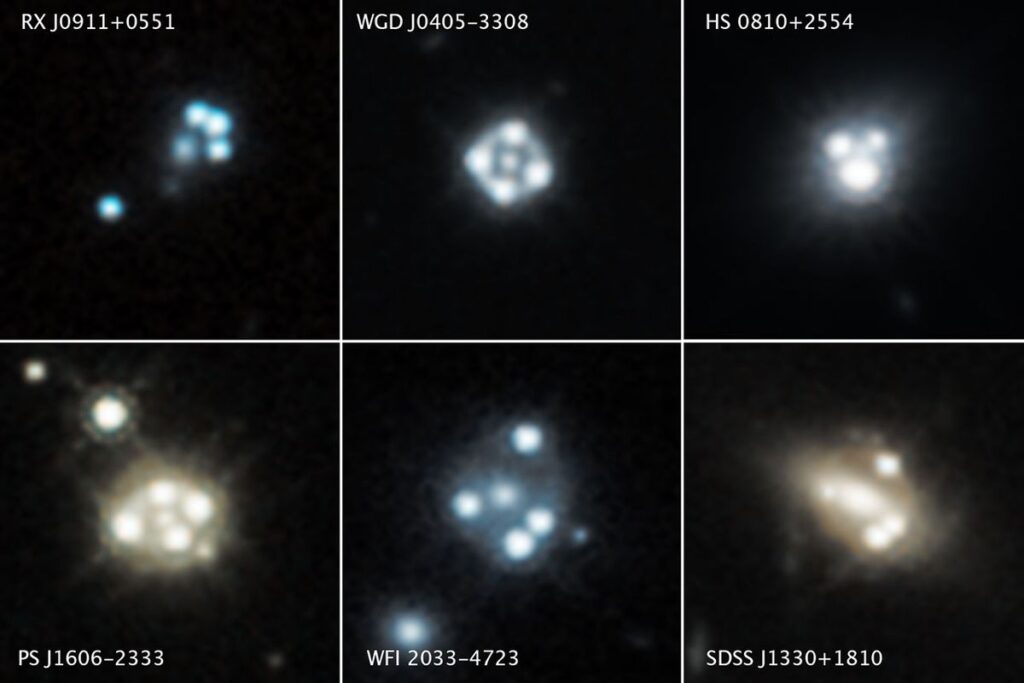

The study entitled “TDCOSMO 2025: Cosmological constraints from strong lensing time delays” was published in September 2025 in the journal Astronomy & Astrophysics, and authored by the TDCOSMO Collaboration. The collaboration is a group of scientists using a method known as time-delay cosmography to gauge absolute distances and the Hubble constant. Named after astronomer Edwin Hubble, the Hubble constant is the ratio between the velocity at which distant objects recede and their distance from us; its precise value is still debated. The study reports new measurements of how fast the universe is expanding, based on observations of gravitationally lensed quasars.
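In symbols, this ratio is usually written as Hubble's law, where $v$ is the recession velocity, $d$ is the distance, and $H_0$ is the Hubble constant, commonly quoted in kilometers per second per megaparsec. The simple linear form holds only for relatively nearby objects:

```latex
v = H_0 \, d
\quad\Longrightarrow\quad
H_0 = \frac{v}{d}
```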

NASA explains that gravitationally lensed quasars act as natural cosmic magnifying glasses. A quasar is a distant, extremely bright galactic core where a black hole accelerates superheated gas clouds. The powerful light created by these clouds is bent and split into multiple images by the gravity of another massive galaxy in the foreground. Because clumps of dark matter subtly distort the brightness and positions of these multiple images, astronomers can use lensed quasars to detect and measure otherwise invisible dark matter structures along the line of sight and within the lensing galaxy itself. (For more on dark matter, see Novel Theory Explains Both Dark Matter and Dark Energy. Will a New Space Telescope in 2027 Shed Light on the Enduring Mystery? in this edition).

Comprising about one-quarter of the mass and energy of the universe, dark matter is a theorized form of matter that does not interact with light or other electromagnetic radiation and is detectable only through the effects of its gravity.

The research is motivated by the long-standing Hubble tension: different methods for measuring the universe’s expansion rate give conflicting answers, depending on whether they probe the distant early universe or the nearby late universe. If this discrepancy reflects real physics rather than hidden measurement errors, it would imply that current cosmological models are incomplete. The TDCOSMO team aims to clarify whether the tension persists when the expansion rate is measured using a method that is independent of both early-universe observations and traditional late-universe techniques.

To do this, the researchers use time-delay cosmography techniques based on strong gravitational lensing. As massive galaxies bend the light from more distant quasars, multiple images arrive at Earth at slightly different times. By measuring these time delays and carefully modeling how mass is distributed in the lensing galaxies, astronomers can infer the universe’s expansion rate using geometry and gravity alone. The team analyzed eight lensed quasar systems and combined them with data from earlier lens surveys, while explicitly accounting for uncertainties in galaxy mass distributions.
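The reason a time delay constrains the expansion rate can be sketched numerically. The delay between two images is proportional to a combination of distances called the time-delay distance, which scales inversely with the Hubble constant. The sketch below assumes a flat universe with matter and a cosmological constant, a matter fraction of 0.3, and illustrative lens and source redshifts; the function names are hypothetical, and this is not the TDCOSMO analysis pipeline.

```python
import numpy as np

C_KM_S = 299792.458  # speed of light in km/s

def comoving_distance(z, h0, omega_m=0.3, steps=2001):
    """Comoving distance in Mpc for a flat LCDM universe (assumed model)."""
    grid = np.linspace(0.0, z, steps)
    e_of_z = np.sqrt(omega_m * (1.0 + grid) ** 3 + (1.0 - omega_m))
    integrand = 1.0 / e_of_z
    # Trapezoid rule written out by hand.
    integral = np.sum(0.5 * (integrand[1:] + integrand[:-1]) * np.diff(grid))
    return (C_KM_S / h0) * integral

def time_delay_distance(z_lens, z_source, h0, omega_m=0.3):
    """Time-delay distance in Mpc: (1 + z_lens) * D_lens * D_source / D_lens_source."""
    dc_lens = comoving_distance(z_lens, h0, omega_m)
    dc_source = comoving_distance(z_source, h0, omega_m)
    # Angular diameter distances (valid for a flat universe).
    d_lens = dc_lens / (1.0 + z_lens)
    d_source = dc_source / (1.0 + z_source)
    d_lens_source = (dc_source - dc_lens) / (1.0 + z_source)
    return (1.0 + z_lens) * d_lens * d_source / d_lens_source

# Illustrative redshifts and H0 values (assumptions, not taken from the study).
d_low_h0 = time_delay_distance(0.5, 2.0, h0=67.0)
d_high_h0 = time_delay_distance(0.5, 2.0, h0=73.0)
ratio = d_low_h0 / d_high_h0
print(ratio)
```

The ratio equals 73/67, so the same lens would show delays roughly 9 percent longer if the Hubble constant were 67 rather than 73 km/s/Mpc. In practice, the mass model of the lensing galaxy sets the proportionality factor, which is why uncertainties in that model carry over to the result.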

The results indicate an expansion rate that is closer to values obtained from late-universe measurements than to those inferred from the cosmic microwave background, while remaining statistically independent of both. The agreement across different lens samples strengthens the conclusion that the Hubble tension cannot be easily dismissed as a problem with any single observational method. At the same time, the authors emphasize that uncertainties, especially related to how mass is distributed inside lensing galaxies, still limit the precision of the measurement.

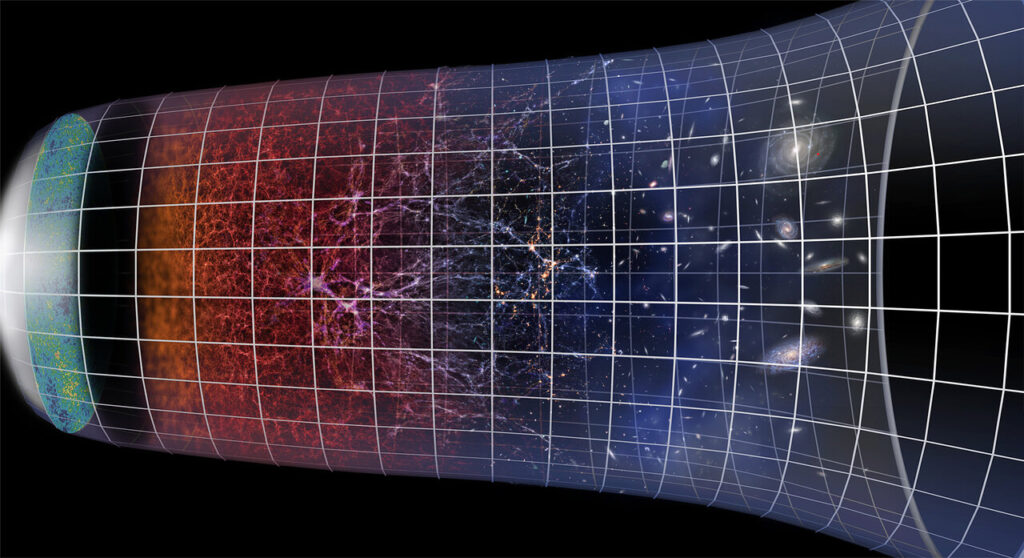

The cosmic microwave background is the relic radiation released when the universe was about 370,000 years old, the earliest moment that light can reveal directly. By then the hot, dense plasma left by the Big Bang had cooled enough for neutral atoms to form, allowing light to travel freely through space.

In sum, the TDCOSMO 2025 study provides independent support for the idea that the Hubble tension reflects a real and unresolved feature of the universe rather than simply a measurement error. While the work does not identify the underlying cause, it narrows the range of viable explanations and highlights the need for larger samples and improved modeling. As more lensed systems are observed with next-generation instruments, time-delay lensing is expected to play an increasingly important role in testing whether new physics is needed to explain cosmic expansion.

Simon Birrer – TDCOSMO H0 results with more data and fewer assumptions | Cosmology Talks

Recent advances in numerical simulations now allow previously simplified processes to be modeled in greater detail, enabling tests of whether subtle physical effects in the early universe could influence later inferences about cosmic expansion. In other words, disagreements about how fast the universe is expanding may trace back to small physical effects in the early universe that were previously too difficult to model accurately.

A study entitled “Hints of primordial magnetic fields at recombination and implications for the Hubble tension” was published in the journal Nature Astronomy (not open access) in December 2025 by Karsten Jedamzik, from the University of Montpellier, and co-authors. The study tests the hypothesis that very weak magnetic fields created in the early universe could have subtly sped up how matter and light separated after the Big Bang, affecting later measurements. The researchers used detailed computer simulations that model both cosmic magnetic fields and how light interacted with matter in the young universe, and then compared the predictions with multiple sets of astronomical observations.

They found that the data mildly to moderately favor the presence of extremely weak primordial magnetic fields, which also lead to a higher expansion rate more in line with late-universe measurements. If confirmed by more precise observations in the future, these results would suggest that faint magnetic fields from the early universe could play a real role in cosmic evolution and might even explain the origin of the magnetic fields evident in today’s galaxy clusters.

This graphic shows the evolution of the Universe, from the Big Bang to the present day. It indicates the growth of the Universe through cosmic expansion and the growth of galaxies and galaxy clusters. The Universe is almost 14 billion years old. Credit: ESO/M. Kornmesser

Another line of research concerned with the Hubble tension is investigating whether the tension arises from how cosmic expansion is described in the first place. Instead of introducing new physical effects, some studies reanalyze existing observations using more flexible methods that place fewer assumptions on how the universe’s expansion has changed over time.

A study entitled “The Hubble Tension Resolved by the DESI Baryon Acoustic Oscillations Measurements” was published in The Astrophysical Journal Letters in November 2025, by X. D. Jia from the School of Astronomy and Space Science, Nanjing University, and co-authors. The paper analyzes recent cosmological data from the Dark Energy Spectroscopic Instrument (DESI) and complementary observations of Type Ia supernovae, stellar explosions that occur when a white dwarf star grows too massive and undergoes a runaway thermonuclear blast.

The research is motivated by two closely related challenges to the standard cosmological model: the persistent disagreement between early-universe and late-universe measurements of how fast the universe is expanding, and recent DESI results suggesting that dark energy may not behave as a constant over time. Rather than treating these as separate problems, the authors ask whether both discrepancies could be explained within a single physical framework involving evolving, or dynamical, dark energy. (For more on dynamical dark energy, see The Quantum Record’s article The Mysteries of Dark Energy Deepen: Does it Expand the Universe at a Constant or Varying Rate? in this edition).

Theorized to comprise about two-thirds of the energy and mass of the universe, dark energy cannot be measured directly; it is inferred from its apparent effect of pushing galaxies apart at an accelerating rate.

Instead of assuming a specific mathematical form for dark energy, the researchers divide cosmic history into intervals and allow the properties of dark energy to be determined independently in each interval. From these results, they derive the universe’s expansion rate directly from the fundamental equations of cosmology, without relying on a predefined model for dark energy behavior.
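A minimal sketch of this kind of binned description follows, assuming a flat universe and a dark energy equation-of-state parameter `w` that is constant within each redshift interval. The bin edges, the `w` values, and the function names are invented for illustration and are not the authors' code or results.

```python
import numpy as np

def dark_energy_density_ratio(z, bin_edges, w_values):
    """Dark energy density at redshift z relative to today, for piecewise-constant w.

    Within a bin the density scales as (1 + z) ** (3 * (1 + w)). Beyond the
    last bin edge the density is held constant (an assumption of this sketch).
    """
    ratio = 1.0
    for lo, hi, w in zip(bin_edges[:-1], bin_edges[1:], w_values):
        if z <= lo:
            break
        top = min(z, hi)
        ratio *= ((1.0 + top) / (1.0 + lo)) ** (3.0 * (1.0 + w))
    return ratio

def hubble_rate(z, h0, omega_m, bin_edges, w_values):
    """Expansion rate H(z) in a flat universe with matter plus binned dark energy."""
    f_de = dark_energy_density_ratio(z, bin_edges, w_values)
    return h0 * np.sqrt(omega_m * (1.0 + z) ** 3 + (1.0 - omega_m) * f_de)

edges = [0.0, 0.5, 1.0, 2.0]    # hypothetical redshift intervals
w_lcdm = [-1.0, -1.0, -1.0]     # a cosmological constant in every bin
w_binned = [-0.9, -1.0, -1.1]   # made-up values, for illustration only

h_lcdm = hubble_rate(1.0, 70.0, 0.3, edges, w_lcdm)
h_binned = hubble_rate(1.0, 70.0, 0.3, edges, w_binned)
print(h_lcdm, h_binned)
```

When every bin has `w = -1`, the sketch reduces to the standard model with a cosmological constant. Letting the bins differ changes the expansion history that a given set of distance measurements implies.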

The analysis reveals a clear trend: the inferred expansion rate is higher when measured using nearby cosmic data and gradually decreases when inferred from more distant, earlier epochs of the universe. This smooth transition bridges the gap between local measurements and those derived from the cosmic microwave background. At the same time, the results show that dark energy’s influence appears to change with cosmic time, in a way consistent across multiple datasets and analysis choices.

When observational data arrives as massive file releases, abundance itself becomes a barrier: too much data, too fast, for human sense-making to keep up. Image: Gerd Altmann, via Pixabay.

Taken together, these studies suggest that astronomy in 2026 is confronting a new kind of limit, not in how much of the sky we can observe but in how confidently we can extract meaning from what we already record. Tools like AnomalyMatch show how artificial intelligence can transform the vast archives produced by instruments like the Hubble telescope from static collections into active datasets, surfacing unusual objects simply by having the capacity to look exhaustively.

At the same time, some of the field’s most important conclusions, such as the universe’s expansion rate, are not directly observed but reconstructed through layers of modeling and inference. Methods ranging from time-delay gravitational lensing to early-universe simulations and DESI reanalysis highlight how sensitive results can be to assumptions and analytical choices. The central lesson is not the sudden confirmation of new physics, but a shift in how discovery works: progress increasingly depends on how we search, model, and rigorously test our interpretations, not just on collecting more data.
