Learning from nature: the era of human-like robots begins.

“The bio-inspired sensor skin developed by University of Washington and UCLA engineers can be wrapped around a finger or any other part of a robot or prosthetic device to help convey a sense of touch”. Photo: UW News.

As humans strive to build ever more efficient and powerful technology, we turn increasingly to nature for inspiration. Mimicking nature’s processes allows us to optimize artificial operations and increase processing speed while reducing energy consumption. From chips based on the architecture of the human brain, to flexible robotic ‘skin’ tissue, this is the era of humanoid robots.

Human bodies are fascinating mechanisms, and the architecture of the brain is particularly influential in developing new technology. Why? Our brains have astonishing computing capacity. The brain is highly energy efficient and performs many computations simultaneously. In the words of Dr. Liqun Luo, professor of neurobiology at Stanford University, it has “superior flexibility, generalizability, and learning capability than a state-of-the-art computer”. And it does all that using no more power than a 20-watt lightbulb.

A conventional digital computer works one step at a time, isolating memory storage from its main processors in each step.

In other words, in traditional chips, calculation and memory are separated, so for each computation the chip must shuttle data (represented as ones and zeroes) back and forth between memory and the central processing unit (CPU). This arrangement naturally becomes more energy-intensive as AI algorithms grow more complex.

The brain is far more efficient than that. Inside our skulls, each neuron serves as both a calculating device and a memory device, with no need to shuttle data back and forth from memory to CPU. Unsurprisingly, researchers have been trying for some time to design a chip based on the brain’s architecture.
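The contrast between the two designs can be sketched with a toy count of data transfers. The Python below is only an illustration under loud assumptions: the function names and the transfer-counting rule are hypothetical, chosen for this example, and do not describe how any real chip is built or measured. It computes the same matrix-vector product twice, once fetching every stored weight from memory and once as if each weight did its multiplication where it is stored.

```python
def von_neumann_matvec(weights, x):
    """Matrix-vector product in a toy model of a conventional chip.

    Assumption: every weight, input, and result crosses the
    memory-processor boundary once, and each crossing is one transfer.
    """
    transfers = len(x)  # inputs delivered to the processor
    out = []
    for row in weights:
        acc = 0.0
        for w, xi in zip(row, x):
            transfers += 1  # one stored weight fetched from memory
            acc += w * xi
        out.append(acc)
    transfers += len(out)  # results written back
    return out, transfers


def in_memory_matvec(weights, x):
    """Same product in a toy model of a compute-in-memory array.

    Assumption: weights stay where they are stored, so only the inputs
    going in and the results coming out count as transfers.
    """
    out = [sum(w * xi for w, xi in zip(row, x)) for row in weights]
    transfers = len(x) + len(out)
    return out, transfers


if __name__ == "__main__":
    weights = [[1, 2, 3], [4, 5, 6]]
    x = [1, 0, -1]
    print(von_neumann_matvec(weights, x))
    print(in_memory_matvec(weights, x))
```

Both functions return the same result, but in this toy count the conventional version needs 11 transfers for a 2-by-3 weight matrix while the in-memory version needs 5, and the gap widens as the matrix grows because the weights dominate the traffic.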

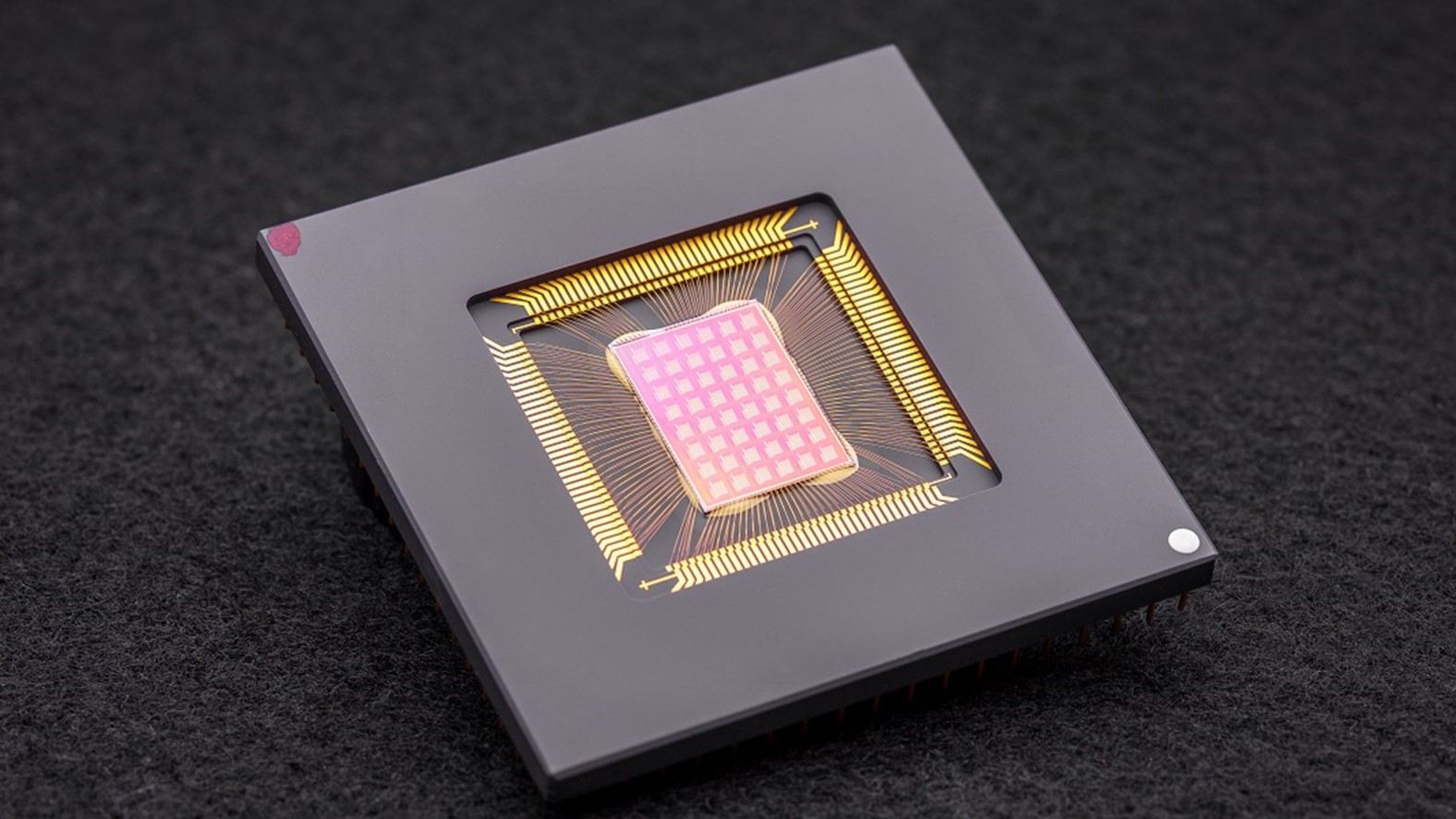

Compute-in-memory, or CIM, chips are based on the brain’s architecture. Image: Singularity Hub

These chips are called ‘compute-in-memory’, or CIM, chips.

They were designed to compute and store memory at the same site, and they promise higher efficiency and lower energy consumption. A new and improved model was recently presented in a 2022 paper published in the journal Nature by Dr. Weier Wan and co-authors.

Their model, NeuRRAM, addresses limitations of earlier CIM chips, such as difficulty handling several different AI tasks, that have so far hindered the technology’s integration into smartphones and other everyday devices. It does so by simultaneously delivering high energy efficiency, versatility, and accuracy. In tests, the chip performed well on multiple AI tasks, such as reading hand-written numbers and decoding voice recordings, with over 84 percent accuracy.

“Having those calculations done on the chip instead of sending information to and from the cloud could enable faster, more secure, cheaper, and more scalable AI going into the future, and give more people access to AI power,” said Dr. Philip Wong, professor of electrical engineering at Stanford University, who co-authored the paper.

Furthermore, if these neural-based (or “neuromorphic”) computing devices are constructed from organic materials, they could potentially allow adaptive connections with biological mechanisms, such as brain-computer interfaces. They might also introduce a range of new prosthetic devices inspired by biology, given how well these organic materials can conform to the contours of curved or rough surfaces. This is the conclusion reached in a 2021 paper published in the Annual Review of Materials Research by Dr. Aristide Gumyusenge, of MIT, and co-authors.

By using soft, flexible organic materials that can operate like the brain’s neurons, these devices can send and receive both chemical and electrical signals. “Your brain works with chemicals, with neurotransmitters like dopamine and serotonin. Our materials can interact electrochemically with them,” says Dr. Alberto Salleo, professor of materials science and engineering at Stanford who co-authored the 2021 study.

To Francesca Santoro, an electrical engineer at RWTH Aachen University in Germany, the ultimate goal is “to design electronics which look like neurons and act like neurons.” One day, these organic neuromorphic computer chips could be implanted into people’s brains, allowing them to operate machines (e.g. an artificial arm, or a computer monitor) with only the power of thought.

Most deep learning applications today are based on various types of neural networks, which loosely model the firing of neurons in the brain. Recent work by Dr. James Whittington, for instance, shows that a special type of neural network known as a transformer can greatly improve the ability to mimic the sorts of computations carried out by the brain. These include one particularly challenging type of computation: mapping locations and tracking spatial information efficiently.

“We’re not trying to re-create the brain, but can we create a mechanism that can do what the brain does?” asked Ha, a Ph.D. candidate in mechanical engineering at MIT.

Robot hand with fine motor control delicate enough to hold a light bulb. Image: Wikipedia.

But the brain is not the only organ whose inner workings researchers aim to reproduce in the lab.

Aside from how we process sensory inputs in our heads, we humans also have incredible machinery built into our bodies for receiving sensory input from the external environment through the ears, mouth, nose, and skin.

Human skin has an amazing ability to detect and respond to the outside world: it is soft and stretchy, and it has millions of nerve endings perfectly designed to sense changes in temperature and the physical characteristics of the objects it touches. The versatility and adaptability of living skin are hard to reproduce in a synthetic version. But new research is coming closer to building an electronic skin, or e-skin.

Recent improvements in materials and electronics have allowed researchers to develop a skin system, with a skin-like base that can flex and stretch, a power supply, and various sensors, as well as paths connecting sensor data to a central processor. Although touch and temperature sensors are not exactly new technology, Dr. Wei Gao, professor of medical engineering at Caltech, says he and his team wanted to go beyond those, to incorporate the ability to detect chemicals.

“We wanted to give it powerful chemical-sensing capability”, he said. Dr. Gao co-authored the 2022 study led by Dr. You Yu and published in the journal Science Robotics. They created a robotic sensor capable of detecting a wide range of hazardous materials, including explosives, pesticides, and infectious pathogens such as SARS-CoV-2.

There are many possible future applications for this type of technology.

“This fully printed human-machine interactive multimodal sensing technology could play a crucial role in designing future intelligent robotic systems and can be easily reconfigured toward numerous practical wearable and robotic applications,” the authors write in the paper.

The e-skin is an integration of soft materials, sensors, and flexible electronics, and looks like a transparent bandage with metallic designs embedded in its surface. Many issues, durability among them, must still be addressed before this technology becomes commercial. Even so, such devices may one day let humans remotely control robots and “feel” the signals they detect.

Humans are perhaps the most complex organisms (that we know of, anyway) that nature has produced over millions of years of evolution. It’s not news that we take lessons from nature to develop technology. But as knowledge of the inner workings of our bodies, and especially the brain, advances, we seem to get closer to the point where we will be able to fully integrate humans and machines. Does this prospect make you more excited, or more concerned?