The mission statement of OpenAI, the company that is commercializing its generative AI products like ChatGPT.

By James Myers

“From here on out, the safe use of artificial intelligence requires demystifying the human condition. If we can’t recognize and understand how AI works – if even expert engineers can fool themselves into detecting agency in a ‘stochastic parrot’ – then we have no means of protecting ourselves from negligent or malevolent products.” (Joanna Bryson, “One Day, AI Will Seem as Human as Anyone. What Then?” Wired Magazine, June 26, 2022)

Philosophy is a waste of time. Intelligence is an algorithm that software engineers can encode, and their code will make the algorithm smarter than the human programmers. Who needs philosophy (which means “love of wisdom” from the Greek roots of the word) when technology will deliver intelligence and wisdom that is superior to our limited human minds?

Portrait of Albert Einstein for the 1921 Nobel Prize in Physics (which was not for his Theory of General Relativity). Image: Wikipedia

Or so it might seem to some technologists who think that intelligence is programmable, and that intelligence is a byproduct of physical matter and energy. After all, if the famous E=mc² that Albert Einstein’s genius uncovered in 1905 tells us all about energy and matter, surely cracking the intelligence code is only a question of finding some more variables that operate within the physical equation.

Isn’t it? Physical matter appeared in the universe before intelligence: isn’t that obvious? But how can we be so sure of that? What evidence is there for it and, maybe more importantly, what if it’s the other way around? If intelligence predates physical matter, the intelligence code would require a whole new equation that could well place it beyond the reach of human programmers – and that would be mind-blowing, wouldn’t it? To crack the intelligence code, we would need an Einstein capable of decoding himself, and not just the physical matter that surrounds him.

But there are those who think otherwise.

Beginning at 12:32, in response to a question in the All-In Podcast about the possibility of an “arms race for data” among four or five large technology companies, OpenAI CEO Sam Altman stated, “I don’t think it will be an arms race for data because when the models get smart enough at some point it shouldn’t be about more data at least not for training, it may matter for data to make it useful. The one thing that I have learned most throughout all of this is that it’s hard to make confident statements a couple of years in the future about where all of this is going to go and so I don’t want to try now. I will say that I expect lots of very capable models in the world and it feels to me like we just stumbled on a new fact of nature or science or whatever you want to call it which is like, we can create … you can … like I mean I don’t believe this literally but it’s like a spiritual point, you know, intelligence is just this emergent property of matter and that’s like a rule of physics or something. So people are going to figure that out.”

My question to Sam is, which people are going to figure out intelligence: the people at OpenAI, Microsoft, Google, Meta, or any of the other big technology companies? What are you or they going to do with the intelligence code, when it’s figured out – or has the thinking not yet gone that far?

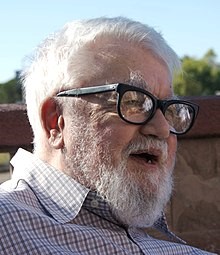

Computer scientist John McCarthy, 1927-2011. Image: Wikipedia

The thing about intelligence is there’s no evidence that the spontaneous creative ability of the human mind can reside in a machine. The human mind can imagine things that never existed, whereas a machine’s calculations are limited to combinations of things that exist. In 1956, computer scientist John McCarthy coined the term “artificial intelligence,” but it wasn’t because he believed a machine was capable of intelligence.

McCarthy’s use of the word “intelligent” was a marketing ploy – which we’ll say more about shortly – but over nearly seven decades since it was introduced, the confused association of human intelligence with computer algorithms has become deeply and globally entrenched. The term “AI” is now seen and used everywhere, and investors are betting heavily that AI is not only equivalent to human intelligence but that it will make what’s in our heads obsolete.

Can we please find a word for what goes on inside a computer, other than “intelligence”?

The problem is that there’s no generally accepted definition of the incredibly variable intelligence that exists in our heads. Regardless, intelligence is something that many companies are seeking to capitalize on and profit from. For instance, the mission of OpenAI, the maker of the large language model GPT-4, “is to ensure that artificial general intelligence – AI systems that are generally smarter than humans – benefits all of humanity.” The mission statement raises the question: how can a human program a machine to be more intelligent than the human programmer? Some questions are, perhaps, best left unanswered in the pursuit of profit.

OpenAI used to be a non-profit company, but abandoned the lofty ideal in the quest for money to build artificial general intelligence (AGI). The company’s major investor is now Microsoft, whose $10 billion investment could yield a return of up to $1 trillion; since OpenAI doesn’t disclose its finances, we don’t know whether a lower limit was promised to Microsoft. Microsoft already makes a lot of money: its fiscal 2023 revenue was $212 billion and net income was $72 billion.

The Death of Socrates, 1787 painting by Jacques-Louis David, at the Metropolitan Museum of Art

Maybe a bit of philosophy would help in the quest for real (not artificial) intelligence.

So far in human history, there’s been no better science than philosophy for understanding the meaning of time. Philosophy is a most challenging “science of science” because it tends to lead to more questions than answers, but then that’s the way the universe works: Heisenberg’s Uncertainty Principle and Gödel’s Incompleteness Theorems guarantee there will always be more questions than answers. At least a bit of philosophy is necessary, when none of us wants to lead a life without meaning.

Philosophical questions can be mind-blowingly difficult, which is why many people naturally prefer to avoid them. The questions that Socrates asked thousands of years ago still linger, long after he was put to death for annoying the rulers of Athens. Philosophical questions can generate endless debate and disagreement. Wouldn’t it be wonderful if an algorithm could put an end to all that philosophical trouble?

A bit of philosophy can, however, often make time go more smoothly. Because of its sometimes troubling questions, philosophy urges us to dig just a bit deeper, and in that digging to find some common ground in our shared human experience. As any human knows, survival and procreation over time – sometimes for 80 years or more – as a biological being in a hostile environment is both a difficult and wondrous experience that’s best lived with a helping hand. How could a machine made of metal and wires, without parents or children and incapable of dreaming, possibly understand the wonders of biological living? What does the machine care about the meaning of time, as long as it receives its steady diet of electricity?

Let’s think for a moment about two of the fundamental bases of intelligence, and their relationship to time. Doesn’t intelligence have something to do with understanding the sequences of cause and effect in time, such that intelligent action leads to better effects? And what about reason – that thing that intelligent people tend to exercise, when they weigh options and justify the one with the best outcomes in cause and effect over time?

Prometheus watches Athena endow his creation with reason. Painting by Christian Griepenkerl, 1877. The name “Prometheus” is said to derive from the Greek pro– (before) and manthano (to learn), and legend holds that Prometheus bestowed the power of creativity on humans. Image: Wikipedia

Time seems to fly especially quickly now, when we’re surrounded by technology and data. So it’s worth pausing for a bit of time to think this intelligence thing out before we attempt to stick it in a machine that could, quite unintentionally, turn out like Dr. Frankenstein’s monster. Do we have the power of the Titan Prometheus, to give birth to a machine replica of the brains in our heads when we don’t even understand how our brains work?

Pitfalls on the Path to AGI

Although it might seem like ages have passed, it was only a little less than two years ago that Google terminated Blake Lemoine, who worked in the company’s Responsible AI group, for claiming that Google’s LaMDA large language model had become sentient. The Washington Post broke that story in June 2022, after Google had already terminated the heads of its Ethical AI division, Margaret Mitchell and Timnit Gebru. All of that happened before OpenAI introduced ChatGPT to global fanfare in November 2022, igniting a debate that has raged ever since about the future of “generative artificial intelligence,” which human creators are now challenging in lawsuits.

Giant technology companies are in a race to extend the reach of AI, with Google’s parent company Alphabet making the most recent move.

AI has taken a giant step forward in its ability to influence human behaviour: Microsoft now embeds Copilot in its applications, advertising company Google has just announced that it is beefing up Gemini and embedding it in as many of its globally pervasive platforms as it can, and OpenAI is looking to offer more of its GPT models for free (as it monetizes their use).

Where are the philosophers in the race to develop AGI?

Of the 162 positions that OpenAI is hiring for as of this writing, none contains the words “ethics” or “philosophy.” The prominent word in many of the available jobs is “engineer,” and the search function on OpenAI’s website reveals the absence of the words “philosophy” and “philosopher” anywhere on the site. Fine then, kudos to you if you somehow end up programming intelligence. Please tell us first, though, what price you’ll put on it for the rest of us feeble humans who will be at its service.

Intelligence is too important to be a marketing gimmick.

As we have noted, when John McCarthy coined the term “Artificial Intelligence” in 1956, it wasn’t because he thought the machine could be smart. He called the machine an “automaton,” which is the exact opposite of smart. “Artificial Intelligence” was a marketing ploy to attract funding for research.

So enough now of the marketing ploy, please. Maybe McCarthy was onto something when he called the machine an “automaton,” which honoured his own intelligence by acknowledging that the machine would be at his command and not the opposite, which is apparently what we now seek.

Maybe the most important step to understanding what intelligence is, is to stop borrowing words that describe human capabilities and applying them to machines: in other words, stop anthropomorphizing. If we used different words for machine functions, maybe we would see more clearly the difference between us and the machines that we create, and appreciate our intelligence more than we now do.

As Luciano Floridi and Anna C. Nobre of Yale University and the University of Bologna write in their insightful paper, Anthropomorphising machines and computerising minds: the crosswiring of languages between Artificial Intelligence and Brain & Cognitive Sciences, “perhaps the most problematic borrowing came with the generation of the label of the field itself: ‘Artificial Intelligence’. John McCarthy was responsible for the brilliant, if misleading, idea. It was a marketing move, and, as he recounted, things could have gone differently.”

It’s worth quoting at length from Floridi and Nobre’s paper what McCarthy said about his AI marketing ploy because, as the father of AI, he may offer something important in his perspective on the term he gave birth to:

“Excuse me, I invented the term ‘Artificial Intelligence’. I invented it because we had to do something when we were trying to get money for a summer study in 1956, and I had a previous bad experience. The previous bad experience [concerns, McCarthy corrects himself and says] occurred in 1952, when Claude Shannon and I decided to collect a batch of studies, which we hoped would contribute to launching this field. And Shannon thought that ‘Artificial Intelligence’ was too flashy a term and might attract unfavorable notice. And so, we agreed to call it ‘Automata Studies’. And I was terribly disappointed when the papers we received were about automata, and very few of them had anything to do with the goal that at least I was interested in. So, I decided not to fly any false flags anymore but to say that this is a study aimed at the long-term goal of achieving human-level intelligence. Since that time, many people have quarrelled with the term but have ended up using it. Newell and Simon and the group at Carnegie Mellon University tried to use ‘Complex Information Processing’, which is certainly a very neutral term, but the trouble was that it didn’t identify their field, because everyone would say ‘well, my information is complex, I don’t see what’s special about you’.” (From The Lighthill Debate (1973), with punctuation added for readability).

What is our mindset on time – have we lost our minds, literally?

Do we want AGI to be smarter than humans because when we look at ourselves, like looking in a mirror, we don’t like the way we see our future unfolding in that reflection? The future does look quite grim, judging from the daily news headlines. Is our mindset such that we don’t think we can make ourselves better, but we can make a better machine?

The thing about the future is that it’s ours to make or break! It really is just a question of choosing our own mindset on time: to make or to break the future. Physical matter and energy will do what our minds tell them to do when we put our bodies to the task, and history shows that 7.8 billion human minds have the power to transform E=mc² and change the physical structure of the entire planet Earth.

We hold in our hands the power to transform Earth and make whatever meaning of time we choose. Image by Daniel Bruyland of Pixabay

Right now, it seems our collective mindset is to break the future. Wars are erupting, ancient hostilities are being re-litigated, hatred is replacing hope, tyrants have a good proportion of the world’s people under their thumbs, and a mockery is being made of government of the people, by the people, for the people.

But that’s all just a product of our mindsets, isn’t it? To understand a mindset, stand in front of a mirror and imagine, as you look at yourself, time unfolding in the background. As you witness time unfolding in that reflection, do you think you might have even the tiniest bit of power to make time just a bit better? Imagine what would happen if 7.8 billion of us humans looked in the mirror and exercised our imaginations for a better future.

Imagine what it would be like, with the power of the machines at our human disposal to build a better future of the people, by the people, and for the people. The machines are the tools of the people, and the people have the power to make meaning out of time.

Let’s start exercising people power. Let’s have agency in our own human future. Let’s stop calling the machine “intelligent.”