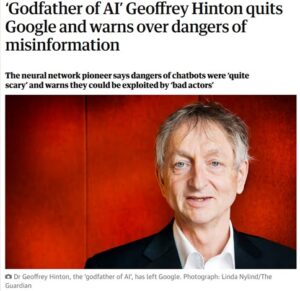

The Guardian reported the resignation of Dr. Geoffrey Hinton from Google (May 2, 2023)

By James Myers

The resignation of Geoffrey Hinton from Google six months ago, citing his concerns about digital misinformation and an existential risk that truly intelligent machines could pose to humanity, made worldwide headlines.

That’s because Dr. Hinton was not only an industry insider but has also been hailed as the “godfather of AI” for his pioneering work in creating effective artificial neural networks that gave birth to today’s rapidly expanding field of machine learning. The citation for his election as a Fellow of the Royal Society in 1998 recognized Dr. Hinton as “internationally distinguished for his work on artificial neural nets, especially how they can be designed to learn without the aid of a human teacher. This may well be the start of autonomous intelligent brain-like machines.”

After a decade working with Google Brain, a deep learning artificial intelligence research team within Google, Hinton left the company that dominates the worldwide internet of data so that he could speak his mind freely about what our future will look like if we go further down the path of machine learning that we are now on.

Preserving the Ability to Speak One’s Mind

Investigating The Quantum Record’s October feature, “What Are We Training AI to Do With Our Data?”, was an eye-opener. I think we all know that our data is being collected, and that our privacy is under threat, but I didn’t realize how interconnected our data has become. I was surprised to learn how much information about my life advertisers and others can piece together from the thousands of cookies on my computer and all the websites I search for and visit.

With the vast amount of our data being applied to machine learning, how much will the machines learn about us, and how soon? And what do the machines’ programmers want to do with all that knowledge about us?

Since the internet came into widespread use, beginning in the mid-1990s, privacy has always been an issue, but the risks to our privacy are now multiplying more rapidly by the day. It’s clear to see that data breaches are everywhere. Not even the biggest companies with the best cyber-defences that money can buy are immune to criminal intrusion. But even if our most sensitive data is not breached by criminals, we still freely grant a great deal of information on ourselves to legal and legitimate online businesses and app-makers.

Privacy policies, to which we must often give full consent before we can search the web or use common and needed apps, run to many impenetrable pages, are written in language whose implications would challenge even a lawyer to fully understand, and are seldom read before we users agree to them.

I am as guilty as anyone in failing to review the user agreements I agree to – do I have the time to find, then read and understand, the dense policies for all the online applications I use?

While many people are concerned about their privacy, I hear two common excuses for inaction. One is along the lines of, “There’s nothing interesting about me that they’ll find anyway,” and the other is, “I guess that’s the price we have to pay for free access to the app.”

Knowing that in 2022 Google made a profit of $60 billion for itself by leveraging our data, I spent some time with Google’s privacy policies. It was interesting to read the extent to which the data we freely grant the company is used not just by Google but shared with its advertising clients. We – and that means all of us, whether we think we’re important or not – should be concerned to know the answer to the question, “What are we training AI to do with our data?” Our human future, as Dr. Hinton suggests, may hinge on the answer.

The buying and selling of our data is a $200 billion industry for the data brokers, but only they know how the industry operates. With this in mind, there are two changes in data-gathering practices that could help preserve for us humans at least some future choices for the data that we generate during the miracle of biological life.

- Changing the Default for Privacy Policy Consents to Highlight Specific Key Privacy Risks:

Instead of the common default setting requiring users to accept or reject all the privacy policies, the default could instead be set to give us an option to either accept or reject specific key provisions of the privacy policy. Do I want to provide my location data to a company I have no direct relationship with, in a third-party cookie? No, thank you. Do I want to share my location data for use in navigation software? Yes, please. This would, of course, be a very difficult change to agree on, given the very deeply vested economic interests that benefit from the current everything-goes default. It’s not difficult to imagine that they would receive less free-ranging consent than they now enjoy.

- An International Standard Framework for Privacy Policies:

Within the framework, businesses could still tailor policies for their specific circumstances and needs, but the framework would require clear disclosures of, and consents for, higher-risk data. There are well-established precedents for contractual frameworks that are widely followed in international finance and many other business areas. Is there any reason why privacy policies shouldn’t be similarly set out, within a framework that would ensure users clearly understand its key elements? The risk-based approach to disclosures could be modelled in the same way as the three tiers of risk that the European Union’s evolving AI Act assigns to different categories of AI. Risk ratings could be assigned to different types of data collection: for example, a lower risk could be assigned to first-party cookies, a higher risk to third-party cookies, and the highest risk to biometric data like our faces and voices – with increasingly rigorous disclosures and consents required for higher-risk collection practices.
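For readers who think in code, the two proposals above can be sketched roughly as follows. This is only an illustration under my own assumptions, not any regulator’s or company’s actual scheme: the names `Risk`, `Provision`, `disclosure_order` and `permitted` are hypothetical, and the rule that a missing answer counts as a rejection is one possible reading of a per-provision default.

```python
from dataclasses import dataclass
from enum import IntEnum


class Risk(IntEnum):
    """Hypothetical three-tier rating for data-collection practices."""
    LOWER = 1    # e.g. first-party cookies
    HIGHER = 2   # e.g. third-party cookies
    HIGHEST = 3  # e.g. biometric data such as faces and voices


@dataclass(frozen=True)
class Provision:
    """One key provision of a privacy policy that a user can accept or reject."""
    name: str
    risk: Risk


def disclosure_order(provisions):
    """List provisions with the highest-risk items first, so they are disclosed most prominently."""
    return sorted(provisions, key=lambda p: p.risk, reverse=True)


def permitted(provisions, consents):
    """Return the names of provisions the user explicitly accepted.

    Assumption: a provision with no recorded answer is treated as rejected.
    """
    return [p.name for p in provisions if consents.get(p.name, False)]


provisions = [
    Provision("first_party_cookie", Risk.LOWER),
    Provision("third_party_location_cookie", Risk.HIGHER),
    Provision("face_scan", Risk.HIGHEST),
]

# "No, thank you" to the third-party cookie; no answer given for the face scan.
consents = {"first_party_cookie": True, "third_party_location_cookie": False}

for p in disclosure_order(provisions):
    print(p.risk.name, p.name)
print("Permitted:", permitted(provisions, consents))
```

In this sketch nothing is collected unless the user has said yes to that specific provision, which is the opposite of an accept-everything default.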

Can we imagine the ways that data harvesting would change, with these two safeguards in place to inform the data-generating public?

Are these two provisions unfair, in any way, to either the data-generators or data-gatherers? If so, how would the proposed disclosures be any more unfair to one side or the other than the present inequality?

Fairness is something that humans care about – so much so that bloody wars erupt when one group of humans feels unfairly treated by another group. Do the machines care about fairness?

What are we teaching the machines about us?

If the machines learn so much that algorithms can provide their programmers with an unfair measure of power to predict and manipulate our actions, how will they judge the billions of us humans who don’t know how to program an algorithm or lack access to a powerful AI?