Image: Pic_Panther, on Pixabay.

By Mariana Meneses

Voice cloning technologies use artificial intelligence to generate new speech that is recognizably tied to a specific individual. While earlier systems required hours of recorded audio and produced limited realism, recent advances allow convincing clones from only seconds of input. This rapid shift has enabled assistive technologies for people who have lost their voices, restoring their ability to create content and communicate, and it has expanded commercial and educational applications.

The increasing ease and realism of voice cloning are also fueling the spread of audio “deepfakes” used for deception, impersonation, fraud, extortion, and other crimes. (For more on deepfake risks, see The Quantum Record’s August 2025 feature Rise of Virtual Reality Tech Increases Risks of Entering AI’s Third Dimension, and the Need for Immersive Rights.)

This growing tension is already moving beyond research into law and public action. In Europe, Denmark has proposed amendments to its copyright rules that would give individuals stronger control over the use of their voice and likeness in AI-generated deepfakes, requiring consent for realistic imitations and extending protection even after death. At the same time, high-profile figures are seeking similar protections through existing legal tools: in April 2026, pop star Taylor Swift filed trademark applications covering specific uses of her voice and image, seeking to establish clearer boundaries around consent and to prevent AI-generated imitations that are “confusingly similar.”

Together, these developments suggest that as voice cloning becomes technically easier, societies are beginning to treat the voice less as a neutral signal and more as a form of identity that requires explicit ownership, authorization, and protection.

In “What I learned while cloning my own voice”, Casey Newton, a journalist and co-host of the New York Times podcast Hard Fork, describes experimenting with AI voice cloning to create an audio version of his newsletter, Platformer. Early attempts, first with tools such as Play and later HeyGen, produced unconvincing results, but newer models such as ElevenLabs’s “Flash v2.5” improved quality significantly. Having initially resisted the idea because of the effort required to record training data, he ultimately created a “professional voice clone” by recording hours of his own speech in a tone matching his writing style. Newton says the result was strikingly realistic in timbre but still imperfect in rhythm, emphasis, and pronunciation (especially of acronyms), and that it sometimes came across as monotonous.

The voice clone allows Newton to launch an audio version of his newsletter without the effort of recording it every day, expanding the ways readers can engage with his work. While he acknowledges potential downsides, such as emotional flatness and the risk of audience discomfort, he frames the experiment as a way to extend his capabilities rather than to replace human creativity.

Newton’s experience reflects a broader pattern identified in recent research on how voice cloning is being deployed and understood.

The article entitled “A systematic literature review on AI voice cloning generator: A game-changer or a threat?” was published in 2024 in the Journal of Emerging Technologies by Genesis Gregorious Genelza of the University of Mindanao Tagum College in the Philippines.

According to the literature review, AI voice cloning is increasingly embedded in real-world applications across industries such as customer service, gaming, education, and virtual reality. The technology makes personalized, human-like interactions possible at scale, allowing synthetic voices to replace or extend human presence and contributing to a broader shift toward the automation of communication and interaction.

Image: Gerd Altmann, on Pixabay.

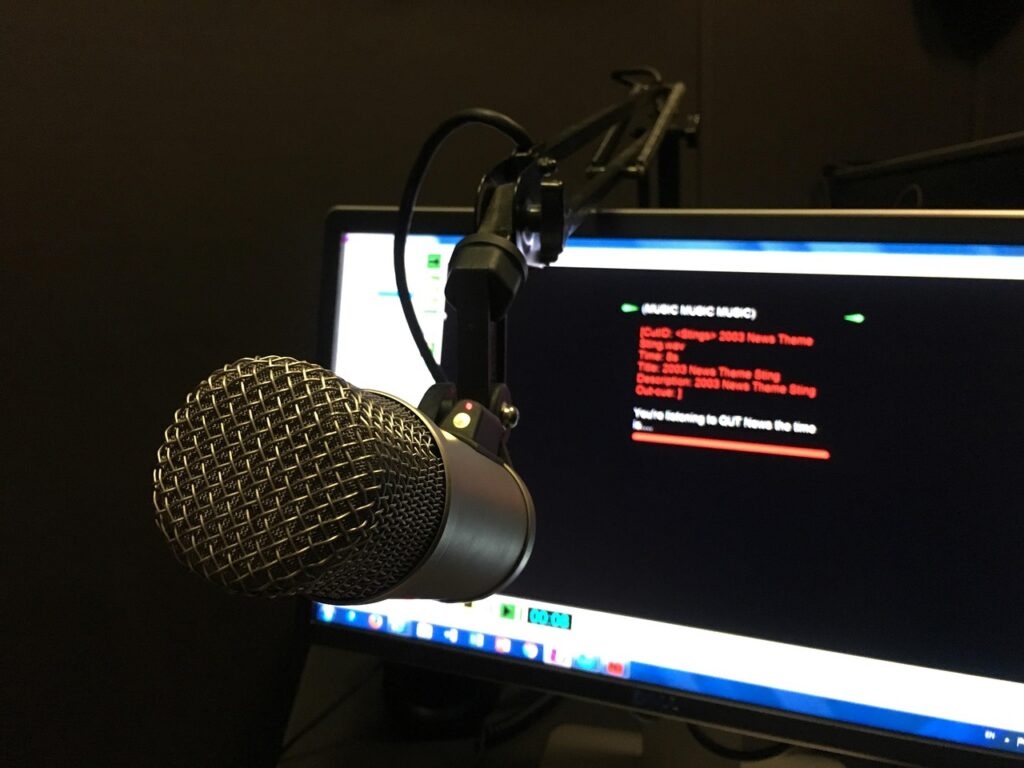

The review also emphasizes growing security risks in practical deployment, particularly the vulnerability of voice as a biometric identifier. As cloning becomes faster and more accessible, it enables real-time impersonation in phone calls and digital environments, facilitating fraud, social engineering, and identity theft. In this context, the concern extends beyond individual misuse to a more structural issue: the potential erosion of trust in audio as a reliable form of evidence. As a result, the review identifies detection systems capable of distinguishing human from AI-generated speech as an urgent need.

Finally, the review frames voice cloning as a regulatory and governance challenge, stressing that voice data should be treated as sensitive personal information. It calls for strengthened data protection measures, including explicit consent, limits on data use, and secure storage practices. The review argues that effective oversight will require coordination between industry leaders, policymakers, and ethicists, with regulatory frameworks prioritizing authorization, transparency, and accountability to ensure that the technology is deployed responsibly.

While this perspective focuses on applications and risks, other research examines how voice cloning interacts with perception, identity, and human experience. How does voice cloning affect listeners when the voice being synthesized belongs to someone recognizable, familiar, or personally significant?

Image: KELLEPICS, on Pixabay.

The article entitled “Voice conversion and cloning: psychological and ethical implications of intentionally synthesising familiar voice identities” was published in September 2025 in the Journal of the British Academy. Its authors are researchers from several universities, led by Carolyn McGettigan, Chair in Speech and Hearing Sciences at University College London and a principal investigator in the Vocal Communication Laboratory.

In the article, McGettigan and co-authors describe a shift similar to the one reflected in Newton’s experience: voice cloning has become widely accessible, though still constrained by limitations in expressiveness, data quality, and performance across languages and accents. One reason for these limitations is that the human voice itself is a complex and dynamic signal shaped by anatomy, language, and context, making accurate replication a technically demanding task.

Voices vary not only between individuals but also within individuals depending on emotional state, social context, and communicative intent. As a result, cloning a voice convincingly requires not just reproducing its acoustic properties, but also capturing its variability across situations. The article concludes that current models often fall short in this regard, especially when training data is limited or biased. These limitations are particularly evident for underrepresented accents and identities, highlighting broader issues of inclusivity in AI systems.

The authors note that, from a psychological perspective, voices are powerful cues of identity and social meaning. Humans can recognize familiar voices with high accuracy and infer traits such as personality, trustworthiness, and competence even from very brief samples. Familiar voices also provide measurable cognitive and emotional benefits: they improve speech comprehension in noisy environments and can trigger physiological responses associated with bonding and stress reduction.

Because of these properties, synthetic voices, especially when they closely resemble real individuals, can evoke similar perceptual and emotional responses. This creates opportunities for beneficial applications but also raises concerns about how people interpret and respond to artificial agents that sound human.

Image: Gerd Altmann, on Pixabay.

The article cautions that the integration of human-like voices into machines can lead to anthropomorphism, where users attribute human qualities such as intelligence, intention, and emotion to artificial systems. This can enhance interaction but also creates risks, including overtrust, inappropriate emotional attachment, and even abusive behavior toward human-like agents.

These effects are amplified when the voice belongs to a familiar or specific individual. The self-voice is a special case: it is deeply tied to identity and is perceived through both auditory and bodily mechanisms, which leads to complex reactions when it is reproduced artificially. Individuals may accept variation in their own cloned voice or even adopt alternative vocal identities, but the psychological and identity-related implications of this remain underexplored.

McGettigan and co-authors conclude that voice cloning raises significant ethical, legal, and social questions. Issues of data ownership are central: while voice recordings are considered personal data, the status of a cloned voice as “owned” by an individual is philosophically and legally ambiguous. Regulatory efforts are emerging to prevent misuse, particularly in cases of impersonation and fraud, but protections remain uneven, especially regarding the use of a cloned voice after the speaker’s death. At the same time, biases in training data can lead to unequal representation and reinforce social inequalities.

Looking ahead, voice cloning may become part of broader systems that simulate full digital personas, including posthumous “digital afterlives.” While such applications may provide comfort or continuity, they also raise complex questions about consent, authenticity, and the emotional impact of interacting with lifelike representations of real people.

Image: AndyLeungHK, on Pixabay.

Voice cloning has rapidly evolved into a practical technology capable of generating highly realistic speech from minimal audio input, enabling its use across a wide variety of applications. However, voices are not neutral signals: they convey identity, emotion, and social meaning, which means synthetic voices can shape perception and interaction in ways similar to human voices. At the same time, performance remains uneven, with constraints related to data quality, representation, and limited expressiveness.

This technology introduces serious legal and personal risks, as well as broader governance challenges. Studies point to the growing vulnerability of voice as a biometric identifier, with increasing potential for real-time impersonation, fraud, and misinformation. This underscores the need for detection systems capable of distinguishing synthetic from human speech. In parallel, voice data is increasingly treated as sensitive personal information, requiring clear standards around consent, storage, and use.

Together, these developments position voice cloning as both a scalable communication tool and a domain requiring structured oversight to address security, ethical, and regulatory concerns.

Craving more information? Check out these recommended TQR articles:

- Thinking in the Age of Machines: Global IQ Decline and the Rise of AI-Assisted Thinking

- Cleaning the Mirror: Increasing Concerns Over Data Quality, Distortion, and Decision-Making

- Quantum Governance: Can the Gap Between Technological Acceleration and Risk Management be Closed?

- Low Earth Orbit Is Becoming Structurally Unstable with Megaconstellations, Space Debris, and Governance Issues

- Digital Sovereignty: Cutting Dependence on Dominant Tech Companies


Spotted an error or want to contribute your expertise? We’d love to hear from you — reach us at info@thequantumrecord.com. The Quantum Record exists to bring researchers and curious minds together around science and technology that matters.