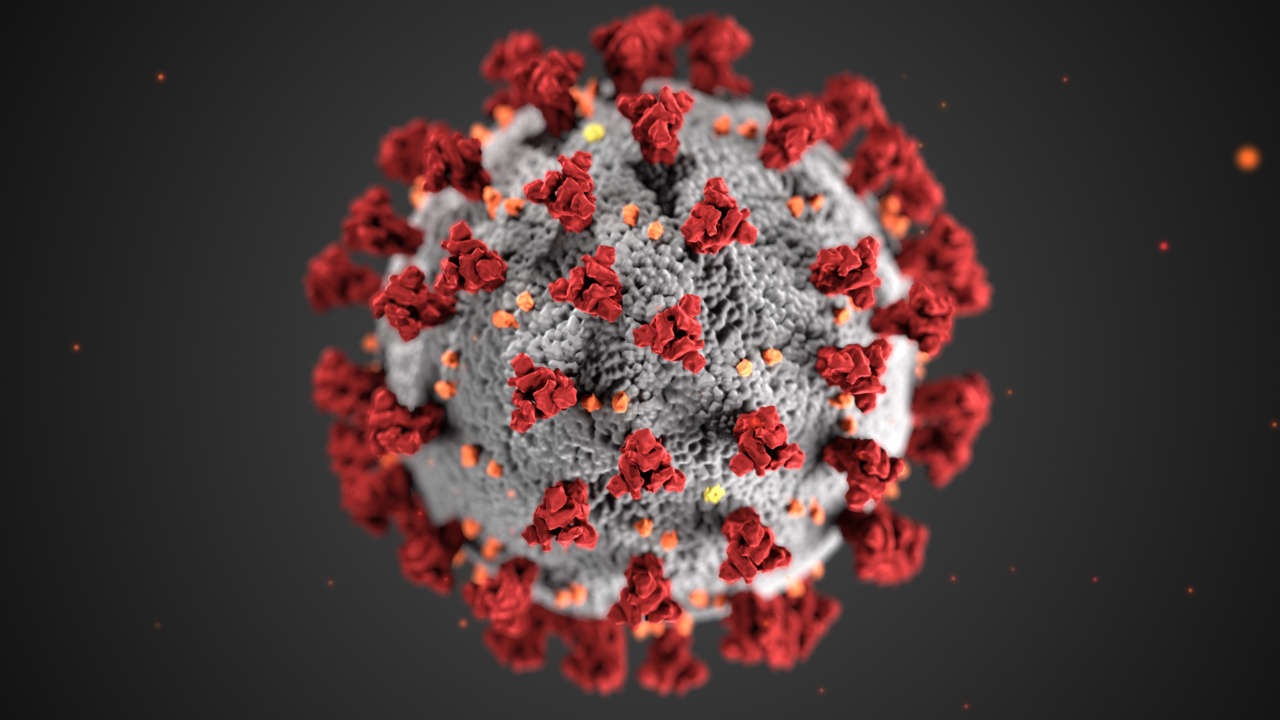

The SARS-CoV-2 virus. Technology aided in the rapid development of Covid-19 vaccines and treatments, saving millions of lives.

By Mariana Meneses

AI is revolutionizing the way we respond to diseases.

AI is being used to automate the drug discovery process, develop new treatments, and improve the diagnosis and treatment of diseases. However, there are ethical concerns surrounding the use of AI in healthcare, such as the potential for bias and discrimination.

According to Forbes, the pharmaceutical industry is increasingly using AI and robotics to revolutionize drug discovery.

The integration of artificial intelligence and machine learning into pharmaceutical innovation has raised significant expectations. AI startups and technologies have been receiving much attention, among them AlphaFold, DeepMind’s AI system that predicts a protein’s 3D structure from its amino acid sequence with high accuracy.

One noteworthy pioneer in this domain is Dr. Jiye Shi, who has merged computational design and automation platforms with AI-driven strategies. Of particular note is Bimekizumab, a new antibody designed using advanced machine learning techniques. Approved in Europe to treat psoriasis, it exemplifies the potential of AI to expedite drug development.

Another example that could accelerate the development of new medical treatments is LabGenius, a company that has been using machine learning algorithms, automated robotic systems, and DNA sequencing to largely automate the antibody discovery process.

According to Wired Magazine, the algorithms design antibodies to target specific diseases; the robotic systems then build and grow them in the lab, run tests, and feed the data back into the algorithms, all with limited human supervision. This approach explores potential antibodies far more quickly and effectively than humans could, given the huge number of variables that would otherwise take significant time to investigate.

The goal is to create new therapeutic antibodies that can differentiate between healthy and diseased cells, stick to the diseased cells, and then recruit immune cells to eliminate the cause of the disease. This process is much faster and more efficient than traditional methods of designing synthetic antibodies, which can be slow and labor-intensive for humans.

White blood cells (i.e. leucocytes) are cells of the immune system that protect the body against foreign invaders. Credit: National Cancer Institute.

According to The Guardian, the UK National Institute for Health and Care Excellence (NICE) has recommended the use of artificial intelligence (AI) to help UK clinicians perform radiotherapy.

The AI differentiates healthy cells from damaged cells by creating contours on digital images of CT or MRI scans, which will help to ensure that radiotherapy does not damage healthy cells. This replaces up to 80 minutes of manual labor by a radiographer and, by speeding up the development of treatment plans, will help to reduce waiting lists.

Until now, therapeutic radiographers, dosimetrists and others working in oncology have outlined healthy organs by hand on digital images of CT or MRI scans, so that the dose to normal tissue is minimized and radiotherapy does not damage healthy cells. While it recommended using AI to mark the contours, NICE said that they would still be reviewed by a trained healthcare professional.

Another important development is cloud computing, which has revolutionized the healthcare industry by improving access to data, increasing scalability, and reducing costs, according to Medical Economics.

When everything is in the cloud, data entry and storage processes become quicker, more accurate, and more organized. Cloud storage allows medical facilities to reduce human error while conserving the space otherwise required to store manual files.

It’s imperative that we seek transparency and accountability in AI-driven medical decisions. Image generated using Ideogram AI.

According to a Lancet paper by Hanzhou Li, from Emory University, and co-authors, large language models (LLMs) have the potential to aid clinicians in generating outlines of treatment options for diseases, but there are ethical concerns surrounding their use.

LLMs can aid in tasks such as clinical documentation, manuscript submissions, and patient-friendly communication. However, ethical issues in the use of LLMs include hidden biases and the disruption of traditional notions of trust. The data used to train LLMs is biased towards high-income, English-speaking populations, which can narrow the discussion of treatments that are effective or common in other regions of the world.

There is also a concern that the use of LLMs could lead to a lack of transparency and accountability in medical research and practice.

For example, a clinician in Africa who uses an LLM to generate a presentation of treatment options for diabetes could end up focusing on treatment paradigms that are mainly researched and applied in wealthier countries. These methods might not only require resources that are scarce in Africa, but also be based on studies that didn’t include diverse populations.

Or, what if AI is used to decide who gets organ transplants?

If the algorithm is programmed to consider only life expectancy, then marginalized individuals, who on average have lower life expectancy, could be discriminated against. These examples highlight the need for transparency and accountability in AI-driven medical decisions.

The remarkable strides made by AI in drug discovery, treatment optimization and diagnosis hold immense potential to transform lives and alleviate healthcare burdens.

However, as we embrace these technologies, it’s crucial to navigate the ethical terrain with vigilance. Ensuring fairness, transparency, and inclusivity should be our compass as we venture into this new frontier.
